Abstract

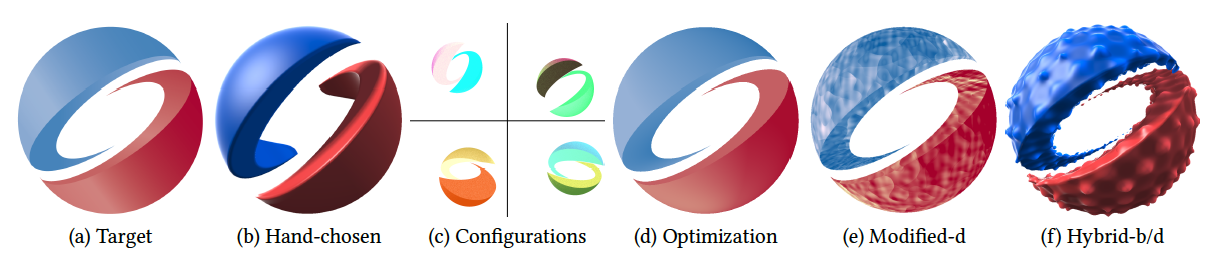

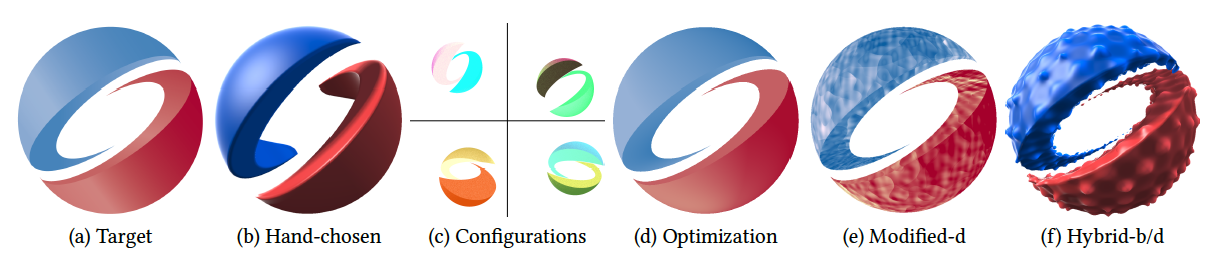

Over the last decade, automatic differentiation (AD) has profoundly impacted graphics and vision applications — both broadly via deep learning and specifically for inverse rendering. Traditional AD methods ignore gradients at discontinuities, instead treating functions as continuous. Rendering algorithms intrinsically rely on discontinuities, crucial at object silhouettes and in general for any branching operation. Researchers have proposed fully-automatic differentiation approaches for handling discontinuities by restricting to affine functions, or semi-automatic processes restricted either to invertible functions or to specialized applications like vector graphics. This paper describes a compiler-based approach to extend reverse mode AD so as to accept arbitrary programs involving discontinuities. Our novel gradient rules generalize differentiation to work correctly, assuming there is a single discontinuity in a local neighborhood, by approximating the pre-filtered gradient over a box kernel oriented along a 1D sampling axis. We describe when such approximation rules are first-order correct, and show that this correctness criterion applies to a relatively broad class of functions. Moreover, we show that the method is effective in practice for arbitrary programs, including features for which we cannot prove correctness. We evaluate this approach on procedural shader programs, where the task is to optimize unknown parameters in order to match a target image, and our method outperforms baselines in terms of both convergence and efficiency. Our compiler outputs gradient programs in both TensorFlow (for quick prototypes) and Halide with an optional auto-scheduler (for efficiency). The compiler also outputs GLSL that renders the target image, allowing users to modify and animate the shader, which would otherwise be cumbersome in other representations such as triangle meshes or vector art.
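To make the box-kernel idea concrete, here is a minimal 1D sketch. It is not the paper's gradient rules or the Adelta compiler. It assumes a single step edge at `theta`, and the names `step`, `naive_grad`, `prefiltered_grad`, `mean_gradient`, and the kernel half-width `eps` are hypothetical, chosen only for illustration. The sketch compares the zero gradient that a conventional AD system reports for a branch with the gradient of the same function after pre-filtering it with a box kernel along the sampling axis.

```python
def step(x, theta):
    """Discontinuous 'shader': 1 to the right of the edge at theta, else 0."""
    return 1.0 if x > theta else 0.0


def naive_grad(x, theta, eps):
    """d(step)/d(theta) as conventional AD reports it: zero everywhere,
    because the branch condition itself is never differentiated.
    (eps is unused; it only keeps the signature uniform.)"""
    return 0.0


def prefiltered_grad(x, theta, eps):
    """Gradient of the box-filtered step (illustrative assumption, 1D only).

    Averaging step over [x - eps, x + eps] gives
    clip((x + eps - theta) / (2 * eps), 0, 1), whose derivative in theta is
    -(step(x + eps) - step(x - eps)) / (2 * eps).
    """
    return -(step(x + eps, theta) - step(x - eps, theta)) / (2.0 * eps)


def mean_gradient(grad_fn, theta, n=100):
    """Average a per-sample gradient over n evenly spaced samples in [0, 1],
    using the sample spacing 1/n as the kernel half-width."""
    eps = 1.0 / n
    xs = [(i + 0.5) / n for i in range(n)]
    return sum(grad_fn(x, theta, eps) for x in xs) / n


if __name__ == "__main__":
    theta = 0.4037
    print("naive:      ", mean_gradient(naive_grad, theta))
    print("prefiltered:", mean_gradient(prefiltered_grad, theta))
```

The mean of `step` over [0, 1] is `1 - theta`, so its true derivative with respect to `theta` is -1. In this sketch the naive gradient averages to 0, while the pre-filtered estimate recovers a value close to -1 from the two samples that straddle the edge.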

Links

Basic Introduction and Tutorial

If you are new to the concept of automatic differentiation for discontinuous programs, these two Medium articles introduce the basic math and the DSL for the Adelta framework:

The tutorial below shows how to author shader programs and differentiate and optimize them under the Adelta framework:

- Part 1: Differentiating a Simple Shader Program

- Part 2: Raymarching Primitive

- Part 3: Animating the SIGGRAPH logo

- Part 4: Animating the Celtic Knot

Citation

Yuting Yang, Connelly Barnes, Andrew Adams and Adam Finkelstein.

"Aδ: Autodiff for Discontinuous Programs - Applied to Shaders."

SIGGRAPH, to appear, August 2022.

Bibtex

@inproceedings{Yang:2022:Adelta,

author = "Yuting Yang and Connelly Barnes and Andrew Adams and Adam Finkelstein",

title = "A$\delta$: Autodiff for Discontinuous Programs - Applied to Shaders",

booktitle = "SIGGRAPH, to appear",

year = "2022",

month = aug

}